The rapid expansion of artificial intelligence computing is running into a critical bottleneck: memory supply. As U.S. technology companies push to build more powerful AI systems, their ability to scale may increasingly depend on a small group of suppliers based in South Korea.

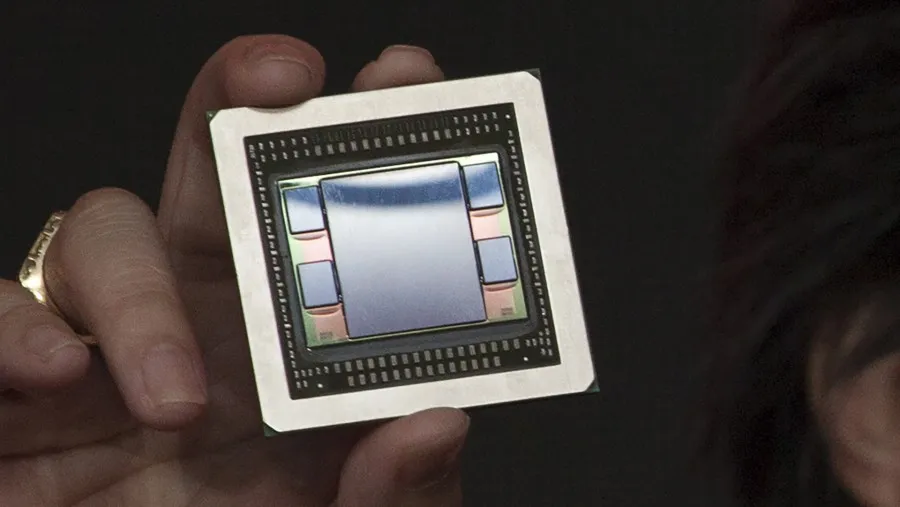

Samsung Electronics and SK hynix, two South Korean chipmakers that rank among the world’s largest memory suppliers, are accelerating development of HBM4E, the next generation of high-bandwidth memory (HBM) designed to handle the data-intensive demands of advanced AI chips. Together with Micron Technology, a U.S.-based memory chipmaker, they are competing to secure an early lead in a market where supply is already struggling to keep pace with demand.

The urgency is being driven by U.S. chip designers including NVIDIA, AMD and Google, which are preparing next-generation processors expected to incorporate HBM4E as early as next year. These chips are central to training and running increasingly complex AI models, where memory capacity and speed have become as critical as processing power itself.

NVIDIA’s upcoming AI accelerator, known as Vera Rubin Ultra, is expected to target memory capacity of around 1 terabyte using HBM4E, roughly 3.5 times the 288 gigabytes supported in its current architecture. The jump underscores how quickly memory requirements are expanding as AI workloads scale.

Yet supply constraints show little sign of easing. SK hynix said recently that customer demand for high-bandwidth memory already exceeds its production capacity by a wide margin and is expected to remain elevated for years, pointing to a prolonged imbalance in the market.

That dynamic is intensifying competition among suppliers. Samsung Electronics is preparing to deliver early HBM4E samples to major clients, aiming to build on its existing manufacturing base and introduce hybrid bonding technology to improve performance. SK hynix plans to follow with its own samples later this year, shifting to a more advanced DRAM process and exploring the use of cutting-edge base dies produced by TSMC.

Micron is also developing HBM4E products on similarly advanced processes, targeting mass production in the second half of next year.

As AI systems grow larger and more complex, control over high-performance memory is emerging as a strategic chokepoint in the semiconductor industry. For U.S. technology companies, the pace of AI development may hinge not only on chip design but also on how quickly their overseas memory partners can expand supply.